Jackson rail line gets latest tech in Norfolk Southern’s efforts to improve safety

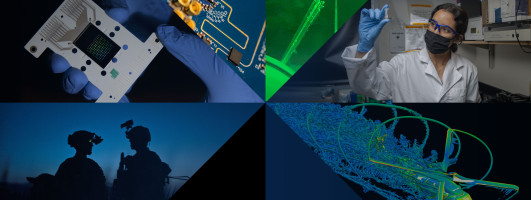

...Now, Jackson is the latest place outside of Ohio to get new technology that’s part of a nationwide safety project from Norfolk Southern.

The technology was built in partnership with Georgia Tech’s Research Institute.

Every time a train goes through this point on the Jackson rail line between Atlanta and Macon, 38 cameras mounted on an arced station light up, capturing 1,000 images above, around and below the train.